What is Photogrammetry?

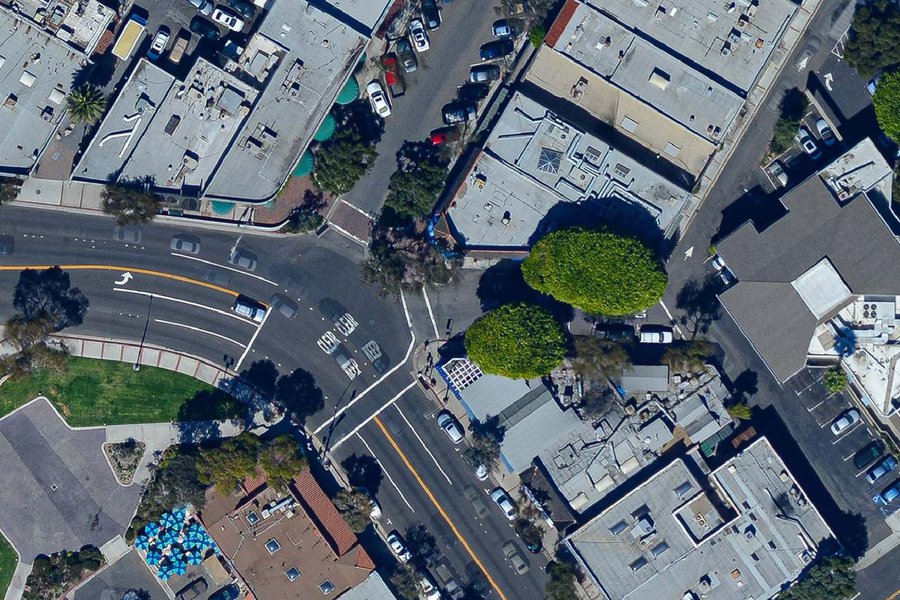

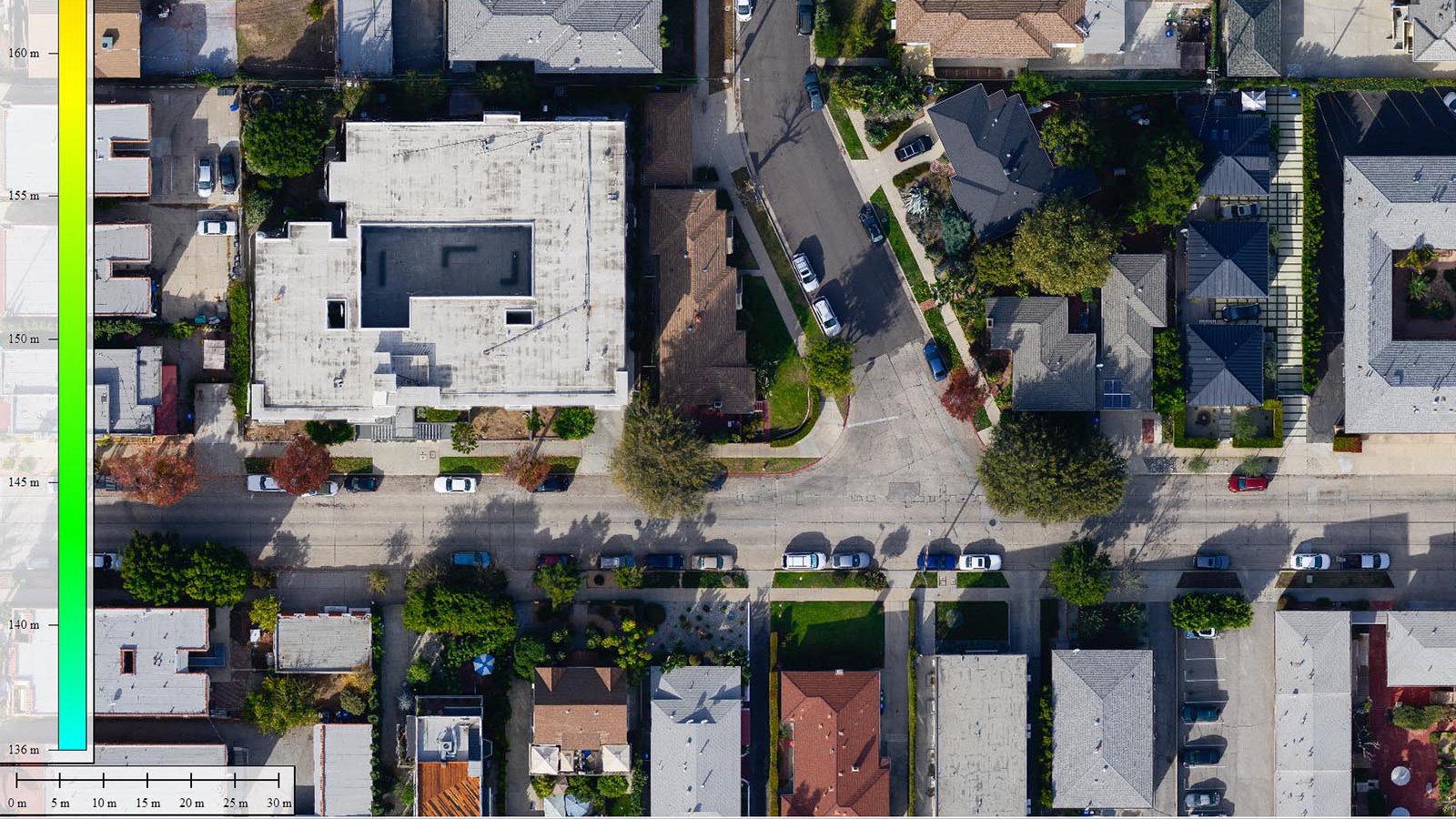

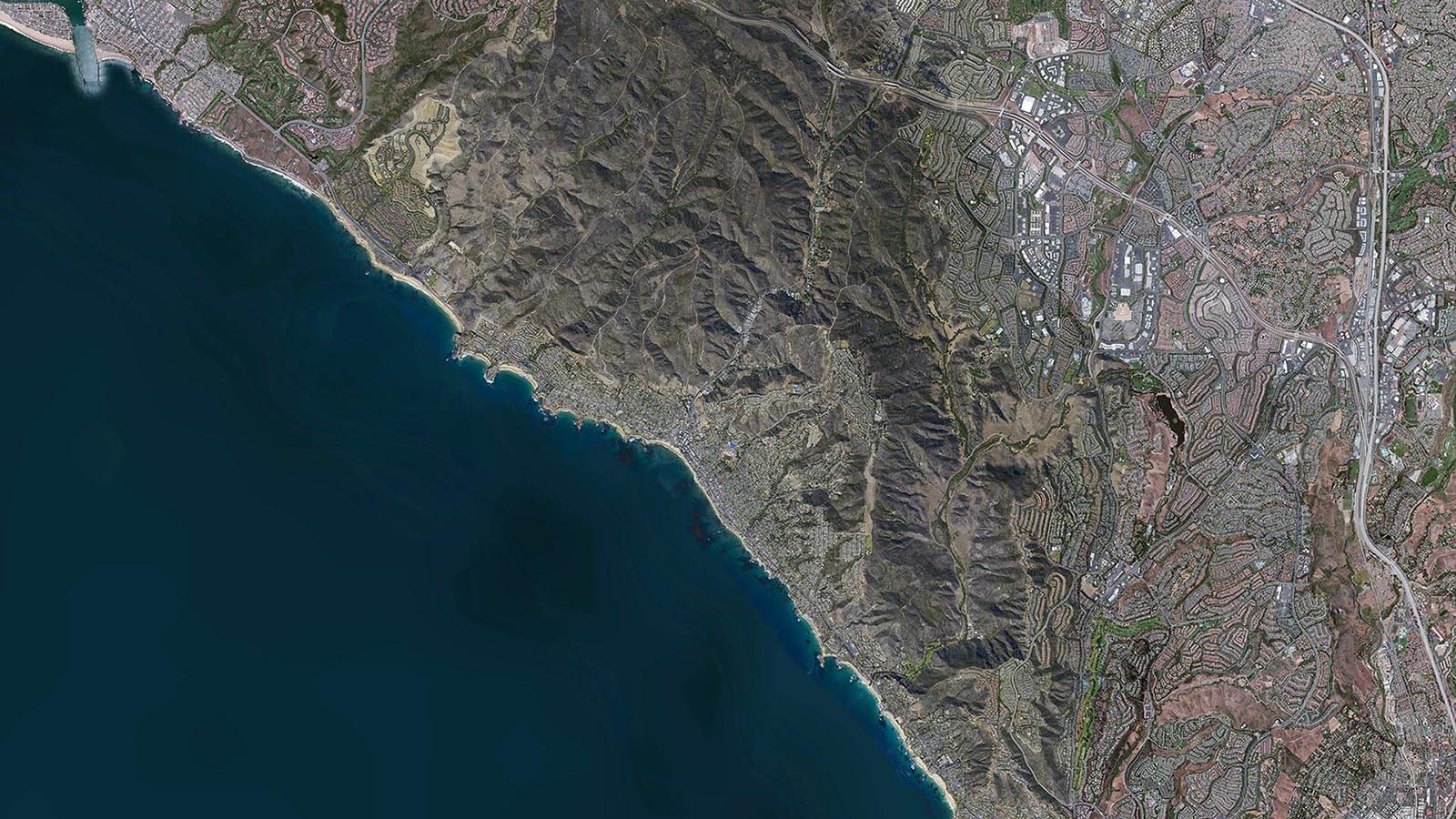

Photogrammetry is one of the most widely used and technically accepted means of creating maps. Using a downward-pointing camera mounted on an airplane, operators take multiple overlapping photos. These photographs are then aligned and processed together into a seamless image, often called an orthophoto or orthomosaic, which has been shown to be both accurate and cost-effective. Newer photogrammetric methods also allow elevation models to be created alongside the orthophoto, so more products can be generated from the same data set.

Orthophoto of Laguna Beach, California

Photogrammetry and Animation

One of the biggest changes in photogrammetry is the ability to quickly and cost-effectively generate dense 3D models of large areas. This has many applications, but some of the most exciting are cinematic special effects and video game environment creation. Being able to quickly capture a 3D model and import it for animation or film greatly speeds up the creation of virtual worlds and digital scenes.

More than Just a Picture

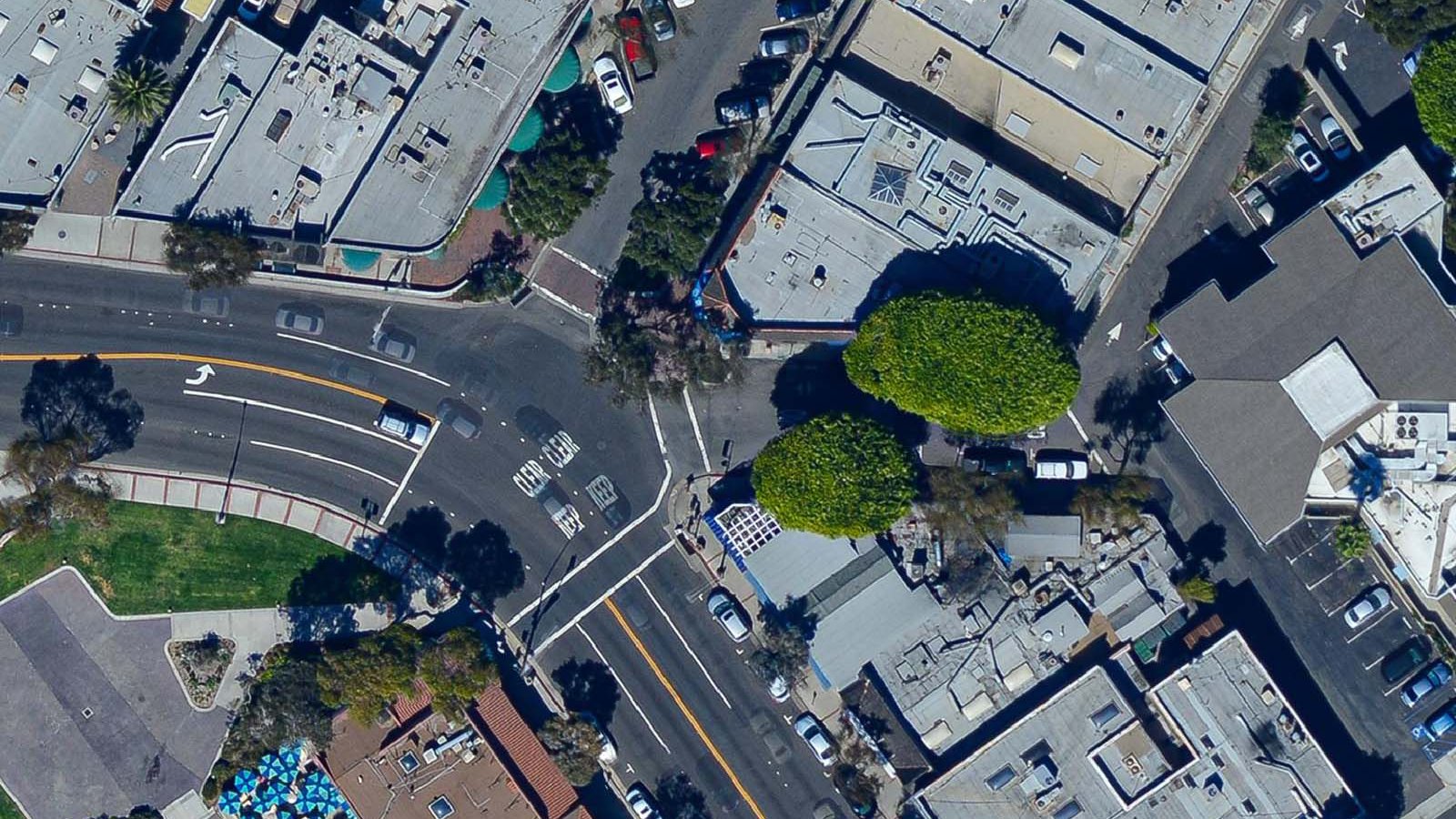

While it may look like a single image, an orthophoto is a mosaic of many images, often hundreds or thousands, that are combined and orthorectified (corrected for the distortions inherent in photographs). The primary distortion in non-orthorectified images is relief displacement, most commonly seen as building tilt.

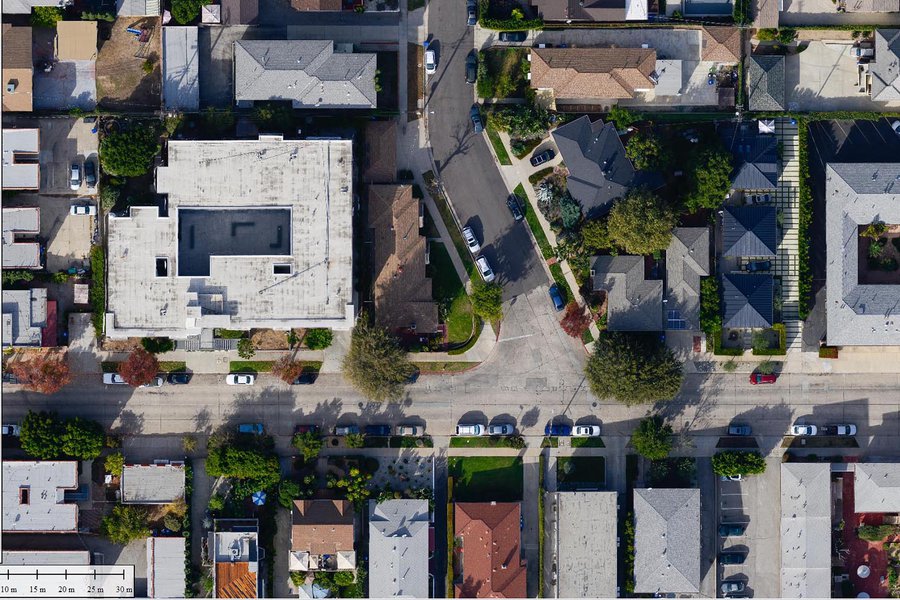

As the comparison image shows, the data set on the right is not corrected for relief displacement (building tilt), while the data set on the left is. While this may seem cosmetic, the accuracy of the underlying map can be seriously affected if these distortions are left uncorrected, because drastic elevation changes in the terrain lead to positional inconsistencies in the map.

Relief displacement, or building tilt, comparison between two different data sets
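
To give a feel for the size of this effect, here is a minimal sketch of the standard relief displacement relation for a vertical photograph, d = r × h / H, where r is the distance of the feature from the photo center on the image, h is the height of the feature, and H is the flying height above the base of the feature. The function name and the sample numbers are illustrative assumptions, not values from the data sets shown here.

```python
def relief_displacement(radial_distance, object_height, flying_height):
    """Approximate relief displacement on a vertical photo: d = r * h / H.

    radial_distance: distance from the photo center to the top of the
                     feature, measured on the image (any unit, e.g. mm).
    object_height:   height of the feature above its base (e.g. m).
    flying_height:   camera height above the base, same unit as object_height.
    Returns the displacement in the same unit as radial_distance.
    """
    if flying_height <= 0:
        raise ValueError("flying_height must be positive")
    return radial_distance * object_height / flying_height


# Hypothetical example: a 30 m building whose roof appears 80 mm from the
# photo center, photographed from 1500 m above the ground.
tilt_mm = relief_displacement(80.0, 30.0, 1500.0)
print(f"Roof is displaced by about {tilt_mm:.2f} mm on the image")
```

The relation also shows why tilt grows toward the edges of a photo: a feature directly below the camera (r = 0) is not displaced at all.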

Not All Data is Equal

One of the major advancements in photography has been the improved optics and clarity of lenses. As cameras pack in more megapixels, the main bottleneck is often the lens, because older lenses weren't designed for the demands of today's high-megapixel cameras. These advances reach general photography much faster than specialty applications like photogrammetry, which has traditionally required very specific metric lenses. New processing technologies now make it possible to use these newer, incredibly sharp non-metric lenses to capture photogrammetric data sets, with great results.

But why is this important?

The two samples below have identical specifications: both have a 3-inch (7.5 cm) ground sample distance (GSD). The difference in the level of detail is very noticeable, however: the image on the left shows much more detail than the image on the right. This is due to the improved optics of the lens used. The image on the right was captured with a metric lens of the kind historically used for mapping applications, while the image on the left was captured with a very sharp prime lens used in general photography.
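
For readers unfamiliar with GSD, the sketch below shows how it is commonly estimated for a nadir-pointing camera: GSD = pixel pitch × flying height / focal length. The camera values are hypothetical and were chosen only so that the result matches the 7.5 cm figure quoted above. Note that GSD describes the ground footprint of a pixel, not how sharply the lens resolves it, which is why two data sets with the same GSD can look so different.

```python
def ground_sample_distance(pixel_pitch_m, focal_length_m, altitude_m):
    """Ground footprint of one pixel (meters) for a nadir-pointing camera."""
    return pixel_pitch_m * altitude_m / focal_length_m


def altitude_for_gsd(target_gsd_m, pixel_pitch_m, focal_length_m):
    """Flying height (meters above ground) needed to reach a target GSD."""
    return target_gsd_m * focal_length_m / pixel_pitch_m


# Hypothetical camera: 5 micron pixels behind a 50 mm lens.
PIXEL_PITCH_M = 5e-6
FOCAL_LENGTH_M = 0.050

gsd = ground_sample_distance(PIXEL_PITCH_M, FOCAL_LENGTH_M, altitude_m=750.0)
print(f"GSD at 750 m: {gsd * 100:.1f} cm")

height = altitude_for_gsd(0.075, PIXEL_PITCH_M, FOCAL_LENGTH_M)
print(f"Flying height for 7.5 cm GSD: {height:.0f} m")
```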

New Photogrammetric Technologies

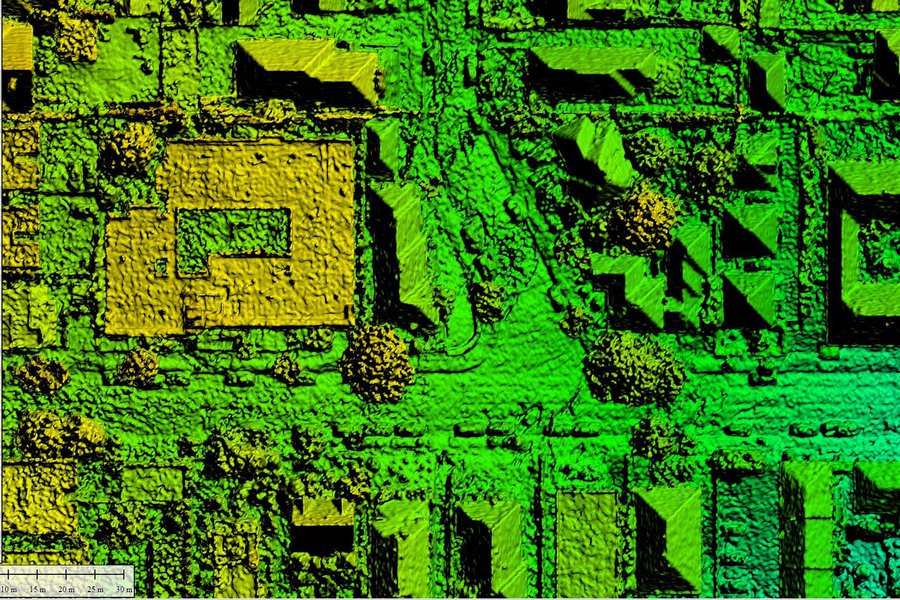

With the ever-increasing advances in technology, it was inevitable that the field of photogrammetry would ultimately benefit. One of the most exciting new methods is called Structure-from-Motion (SfM), which calculates the movement of pixels between successive images and uses it to generate a 3D model. This detailed 3D model is used to help create a true orthophoto, correcting for relief displacement and other artifacts during the creation process. There are multiple benefits to this method, but the primary one is that it allows 3D data to be extracted from existing data sets, eliminating the need for multiple flights. This 3D data has a multitude of uses, such as creating digital terrain models (DTM) and contour lines, or generating a digital model of a structure for use in animation or film.

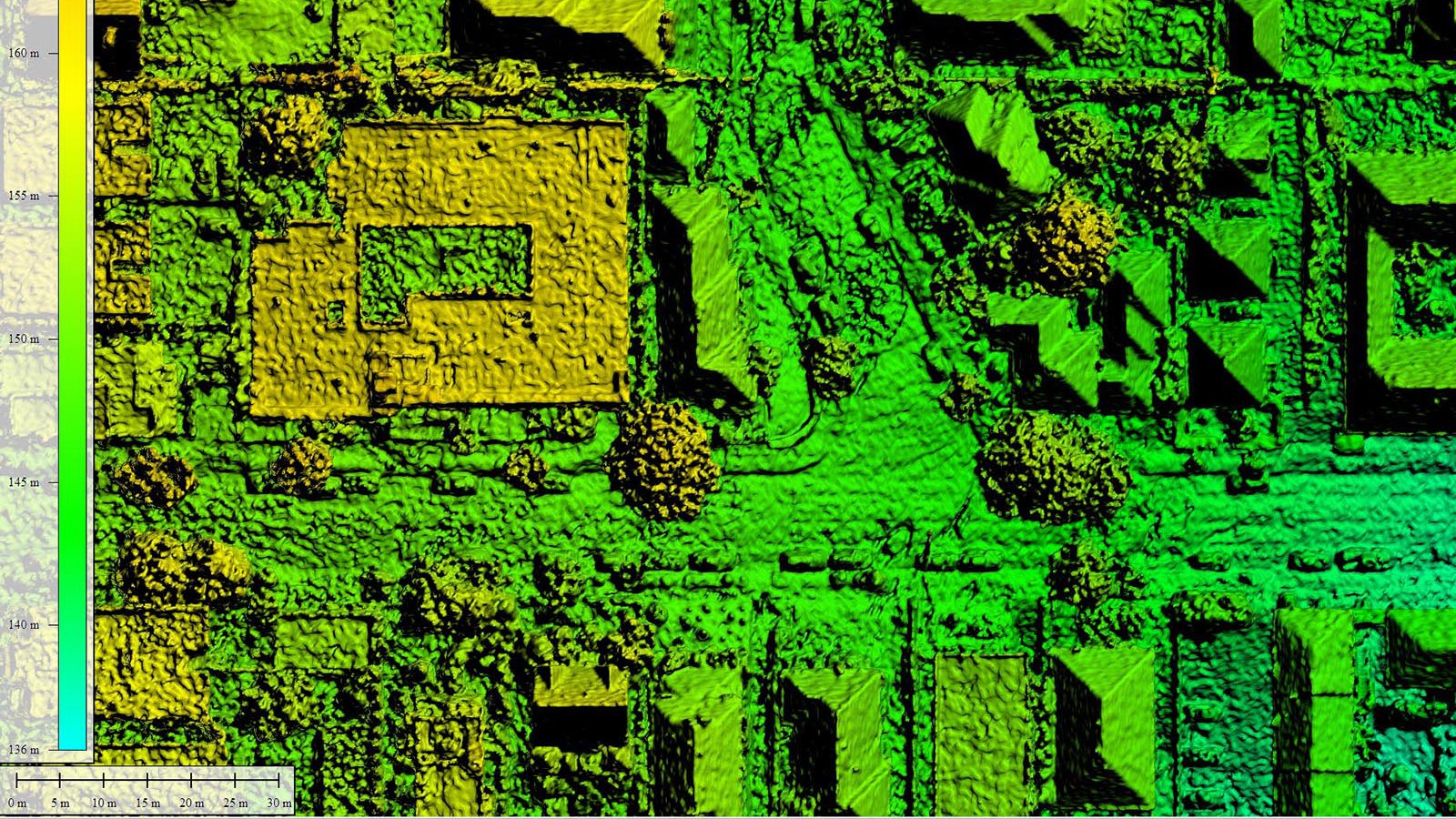

3D Model of a residential neighborhood in Los Angeles, California

Point Cloud

In a point cloud, each point is represented by a color value and x, y, and z coordinates, and the points combine to create a 3D representation of the project area. While the 3D point cloud may appear solid and detailed when zoomed out, zooming in reveals the spaces between points. The attributes of each point can be categorized and filtered in a variety of ways, for example allowing vegetation or structures to be toggled on or off. This makes the point cloud an incredibly robust tool for a variety of real-world applications.
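
As a rough illustration of the idea, the sketch below models a point as coordinates, a color, and a class label, then hides one class. The `Point` type, the label names, and the `hide_classes` helper are hypothetical; real point cloud formats and software use their own classification schemes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    x: float
    y: float
    z: float
    rgb: tuple   # (red, green, blue), each 0-255
    label: str   # hypothetical class name, e.g. "ground" or "vegetation"


def hide_classes(points, hidden):
    """Return only the points whose class label is not in `hidden`."""
    return [p for p in points if p.label not in hidden]


# A tiny made-up cloud; real clouds hold millions of points.
cloud = [
    Point(0.0, 0.0, 10.0, (120, 110, 100), "ground"),
    Point(1.0, 0.0, 18.5, (40, 120, 50), "vegetation"),
    Point(2.0, 1.0, 16.0, (200, 200, 200), "building"),
    Point(3.0, 1.0, 10.2, (125, 112, 101), "ground"),
]

# Toggle vegetation off, keeping ground and buildings visible.
visible = hide_classes(cloud, {"vegetation"})
print(f"{len(visible)} of {len(cloud)} points remain visible")
```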

Stereophotogrammetry, the traditional method used to calculate 3D coordinates from two or more photographs, is supercharged by Multi-View Stereo (MVS), which allows a Structure-from-Motion system to create very dense point clouds. Historically, the use of stereo pairs (two highly overlapping photographs taken from nearby positions) allowed an operator to manually draw contours and break lines. The MVS method allows the computer to calculate the position of each pixel by first determining a precise camera position and then analyzing how much each pixel moves between successive images. The more a pixel moves between successive photographs relative to the rest of the pixels in the image, the higher the point it represents. These pixel calculations are used to generate a point cloud, which benefits from much more detailed elevation data (in comparison to contours) and from the automation of the process.
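
The link between pixel movement and height can be sketched with the classic parallax relation for an idealized pair of vertical photographs: p = f × B / (H − h), where p is the parallax (how far a point shifts between the two photos), f is the focal length, B is the distance between the two camera positions, H is the flying height, and h is the elevation of the point. This is a simplified two-photo illustration, not the full MVS computation, and all names and numbers below are assumptions chosen for the example.

```python
def parallax_for_elevation(elevation_m, focal_length_mm, air_base_m, flying_height_m):
    """Image shift (mm) between two vertical photos for a point at a given elevation."""
    return focal_length_mm * air_base_m / (flying_height_m - elevation_m)


def elevation_from_parallax(parallax_mm, focal_length_mm, air_base_m, flying_height_m):
    """Invert the relation above: recover elevation (m) from measured parallax (mm)."""
    return flying_height_m - focal_length_mm * air_base_m / parallax_mm


# Hypothetical flight: 150 mm lens, photos 600 m apart, 1500 m above the datum.
F_MM, BASE_M, HEIGHT_M = 150.0, 600.0, 1500.0

ground_shift = parallax_for_elevation(0.0, F_MM, BASE_M, HEIGHT_M)
roof_shift = parallax_for_elevation(30.0, F_MM, BASE_M, HEIGHT_M)
print(f"Ground point shifts {ground_shift:.2f} mm, roof point shifts {roof_shift:.2f} mm")

# The larger shift of the roof point maps back to its 30 m elevation.
print(f"Recovered roof elevation: {elevation_from_parallax(roof_shift, F_MM, BASE_M, HEIGHT_M):.1f} m")
```

Because higher points are closer to the camera, they shift more between exposures, which matches the rule of thumb in the paragraph above.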

3D Color Point Cloud of a residential neighborhood in Los Angeles, California

Digital Elevation Models

The 3D point cloud can be processed to create a variety of Digital Elevation Models (DEMs), such as a Digital Surface Model (DSM), which includes natural and built features on the earth's surface, or a Digital Terrain Model (DTM), which filters out those features and represents bare earth.
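
The difference between the two models can be sketched with a very simple gridding scheme, assuming the points already carry a class label: the DSM takes the highest point in each grid cell, while the DTM uses only points labeled as ground. The function name, the label names, and the sample points are hypothetical, and production software uses considerably more sophisticated filtering and interpolation.

```python
import math


def grid_elevations(points, cell_size):
    """Build simple DSM and DTM grids from (x, y, z, label) points.

    Returns two dicts keyed by (column, row) cell index:
      dsm: highest z of any point in the cell (includes buildings, trees)
      dtm: lowest z of the points labeled "ground" in the cell
    """
    dsm, dtm = {}, {}
    for x, y, z, label in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        dsm[cell] = max(z, dsm.get(cell, z))
        if label == "ground":
            dtm[cell] = min(z, dtm.get(cell, z))
    return dsm, dtm


# Made-up points: (x, y, z, label)
points = [
    (0.2, 0.3, 10.0, "ground"),
    (0.7, 0.6, 16.0, "building"),
    (1.4, 0.2, 10.5, "ground"),
    (1.6, 0.8, 18.0, "vegetation"),
    (2.5, 0.5, 11.0, "ground"),
]

dsm, dtm = grid_elevations(points, cell_size=1.0)

# Subtracting the DTM from the DSM gives feature height above bare earth.
heights = {cell: dsm[cell] - dtm[cell] for cell in dtm}
for cell in sorted(heights):
    print(cell, f"DSM={dsm[cell]:.1f}  DTM={dtm[cell]:.1f}  height={heights[cell]:.1f}")
```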